The Social Media Reckoning: How a Landmark Jury Verdict Against Meta and YouTube Could Reshape Digital Responsibility

The Verdict and Its Immediate Fallout

After more than 40 hours of deliberation across nine grueling days, the Los Angeles jury delivered a decisive, though not unanimous, verdict. They found both Meta (owner of Instagram and Facebook) and Google-owned YouTube negligent in the design and operation of their products. Crucially, the jury determined that this negligence was a "substantial factor" in causing harm and that the companies failed to warn users, particularly minors, of the platforms' potential dangers.

The jury awarded $3 million in compensatory damages to the plaintiff, Kaley, apportioning responsibility at 70% to Meta ($2.1 million) and 30% to YouTube ($900,000). However, the financial and legal ramifications are far from settled. The trial now moves to a second phase to determine punitive damages, which are intended to punish egregious conduct and deter future wrongdoing. Both Meta and Google immediately promised to appeal, ensuring a protracted legal battle.

The case's status as a "bellwether" is its most critical aspect. In complex multidistrict litigation (MDL), a bellwether trial serves as a test case, providing a preview of how other juries might rule in similar suits. This verdict sends a powerful signal to the hundreds of other families awaiting their day in court and fundamentally alters the settlement calculus for the entire litigation.

The Plaintiff's Story and the Science of Addiction

The trial’s emotional core was Kaley’s testimony. She described a digital immersion that began in early childhood, alleging that features like infinite scroll, autoplay, and personalized notifications fostered an addictive cycle she could not break. Her legal team argued this was no accident but the result of intentional product design choices that prioritized "maximizing engagement" over user well-being.

Her experience was framed not as a failure of individual willpower, but as a predictable outcome of manipulative design. The lawsuit connected her mental health struggles directly to the platforms' architecture, which leverages variable rewards (likes, new content) and eliminates natural stopping cues—a formula well-documented in behavioral psychology to foster compulsive use. Expert witnesses presented scientific arguments aligning platform design with recognized mechanisms of behavioral addiction, challenging the long-held industry stance that social media is merely a passive tool. For gamers, this psychology is intimately familiar; it's the same principle underlying the dopamine-driven loops of loot boxes, gacha mechanics, and endless progression grinds designed to maximize player retention and spending.

The Legal Strategy: Bypassing Section 230

To secure this unprecedented verdict, Kaley's legal team had to solve a puzzle that had long stymied plaintiffs: how to get around Section 230 of the Communications Decency Act. For decades, this law has served as a nearly impenetrable shield for tech companies, protecting them from liability for content posted by users. The plaintiffs' lawyers, however, aimed their litigation not at user-generated content, but at the product itself.

Their argument focused on algorithmic curation and addictive design features, elements controlled entirely by the companies. By alleging that the harm stemmed from Meta's and Google's own choices in building a dangerous product, akin to a defective physical good, the lawsuit effectively sidestepped the Section 230 defense. This strategic pivot offers a potential blueprint for future litigation against platform giants and, by extension, could provide a model for lawsuits targeting core game design and monetization systems rather than in-game content.

This verdict arrived amidst a multi-front legal assault on Meta. Just one day prior, on March 24, 2026, a New Mexico jury ordered the company to pay $375 million for violating state child exploitation laws. Together, these rulings depict an industry under unprecedented judicial scrutiny.

Corporate Defense and Industry Ripples

In the wake of the verdict, the corporate responses were swift and defiant. A Meta spokesperson stated the company "respectfully disagree[s] with the verdict and are evaluating our legal options," arguing that Kaley's mental health struggles predated her social media use. Google, defending YouTube, announced its intent to appeal and attempted to distance itself from the core accusation, stating the case "misunderstands YouTube" as it is a "streaming platform, not a social media site"—a distinction the jury clearly rejected.

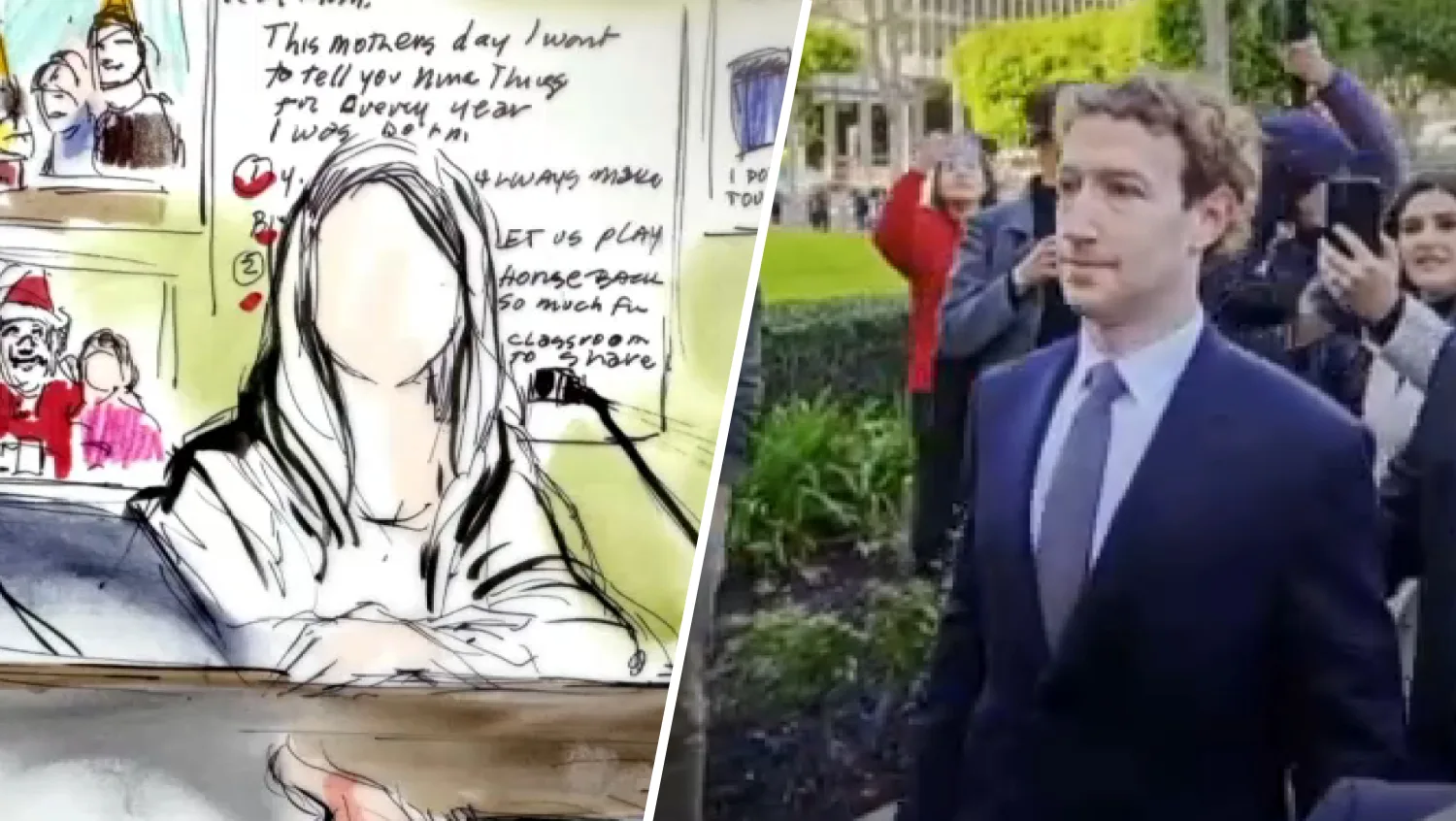

The trial featured high-profile testimony from Meta CEO Mark Zuckerberg and Instagram head Adam Mosseri. Their appearances, often under pointed questioning, revealed internal priorities focused on growth and engagement metrics. For courtroom observers and the public, this testimony peeled back the curtain on a corporate culture that plaintiffs argued valued time-on-platform above all else, including the health of its youngest users.

The broader industry is already feeling the shockwaves. Before this trial began, fellow defendants TikTok and Snap chose to settle, avoiding a public jury trial. This verdict validates their decision to exit and increases pressure on all social media companies to re-evaluate their legal exposure and design practices. The landscape has shifted from theoretical risk to tangible, jury-awarded liability. The gaming industry, no stranger to controversies over addictive design and monetization, is likely watching closely, aware that its engagement-driven business models could face similar legal scrutiny.

What Comes Next: Appeals, Legislation, and Platform Changes

The immediate future is a legal labyrinth. The appeals process could take years and may eventually reach the Supreme Court. However, the mere existence of this plaintiff-friendly verdict changes the dynamic. It empowers other litigants and strengthens the hand of regulators and legislators.

On Capitol Hill, this ruling is likely to influence the debate around long-pending bills like the Kids Online Safety Act (KOSA), which seeks to impose a legal "duty of care" on platforms to protect minors. A jury finding that such a duty was breached provides powerful ammunition for legislative action. Legislation of this kind could establish broad digital safety standards that affect not just social media, but any interactive online service, including games.

Regardless of the final appellate outcome, the verdict may catalyze mandatory design changes. Platforms could be forced to implement features they have long resisted: true chronological feeds, aggressive usage timers, the disabling of autoplay and engagement-based notifications for minors, and radical transparency about algorithmic operations. The threat of further costly litigation may prove a more potent motivator for change than public pressure alone. Game developers may similarly feel pressure to preemptively modify, or more clearly warn about, designs featuring compulsive loops and variable reward schedules.

The March 25 verdict is a watershed moment, both symbolically and legally. It shows that a jury of ordinary citizens can look at the most sophisticated digital products in the world, understand their capacity for harm, and find their creators liable. While appeals will drag on, the verdict is now part of the legal record. This case represents a profound cultural shift, moving the central question from one of user self-control to one of corporate accountability. The jury has spoken, and the message to Silicon Valley is clear: the era of designing without consequence is over. For the gaming world, the verdict is a stark warning. It raises urgent questions about whether studios and publishers could face similar scrutiny for designs that prioritize perpetual engagement over player well-being, potentially reshaping not just social feeds, but game worlds themselves.